We use the words mistake, lapse, and slip as if they are the same thing. Someone misses a deadline — “my mistake.” Someone forgets to send a critical file — “just a slip.” Someone follows the wrong process entirely — “a simple lapse in judgment.” The words get shuffled around interchangeably in post-mortems, apology emails, and performance reviews.

But they are not the same thing. Not even close.

They come from entirely different places in the human mind. They are triggered by entirely different conditions. And crucially — they demand entirely different fixes. When organisations treat all three as one generic “human error” problem, they end up applying the wrong solution to the wrong problem, repeatedly wondering why their training programmes, checklists, and reminders aren’t working.

Here is what the science actually says — and what you can do about it.

The Framework: Where These Ideas Come From

This distinction was rigorously developed by cognitive psychologist James Reason, whose work on human error — particularly in high-risk industries like aviation, nuclear power, and healthcare — remains foundational decades after its publication. Reason categorised human failures into skill-based slips and lapses, and knowledge- or rule-based mistakes, arguing that each operates at a fundamentally different level of conscious processing.

More recently, practitioners like John Evans, in his Error Risk Reduction reference manual, have translated this framework into practical tools that organisations can actually use. Evans’ work is particularly valuable because it moves the conversation away from blaming individuals and toward designing systems that account for the reality of how human beings work under real conditions — distracted, tired, time-pressured, and running on autopilot more often than any of us would like to admit.

The core insight: most errors are not caused by ignorance, carelessness, or bad character. They are caused by the perfectly normal mechanics of the human brain doing exactly what it evolved to do.

What Is a Mistake?

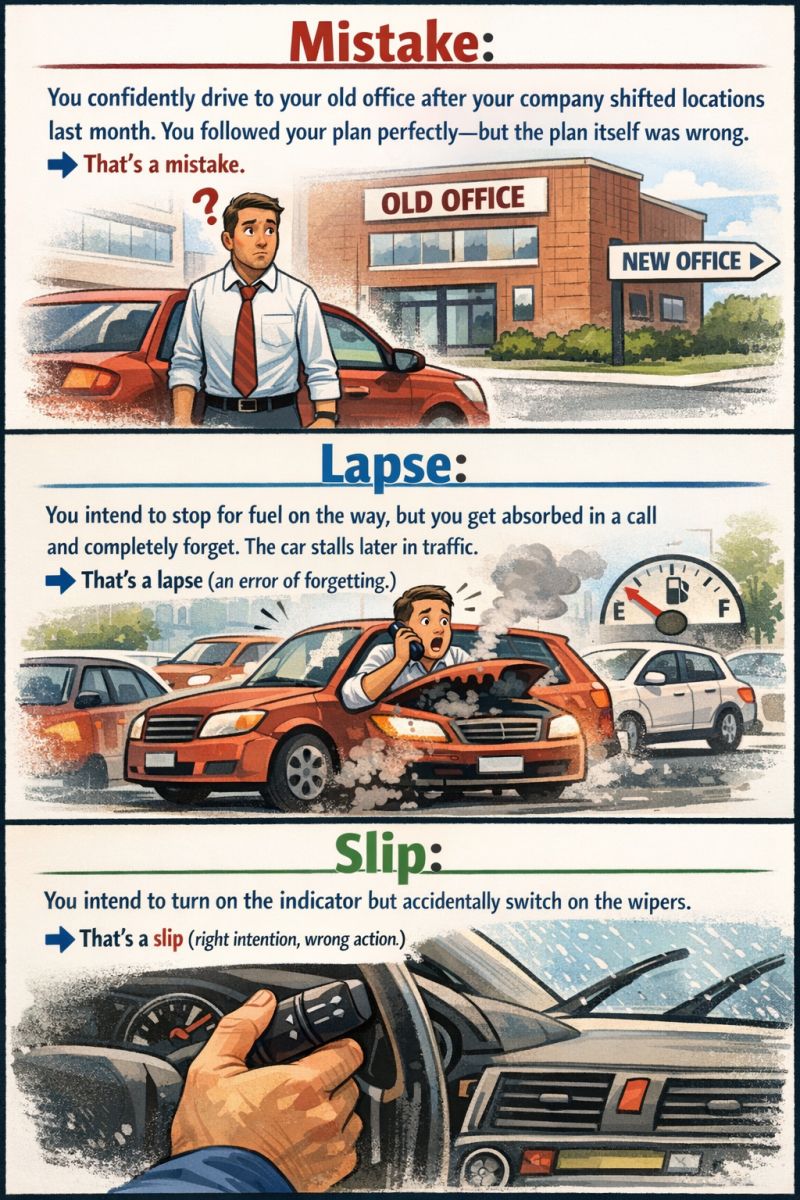

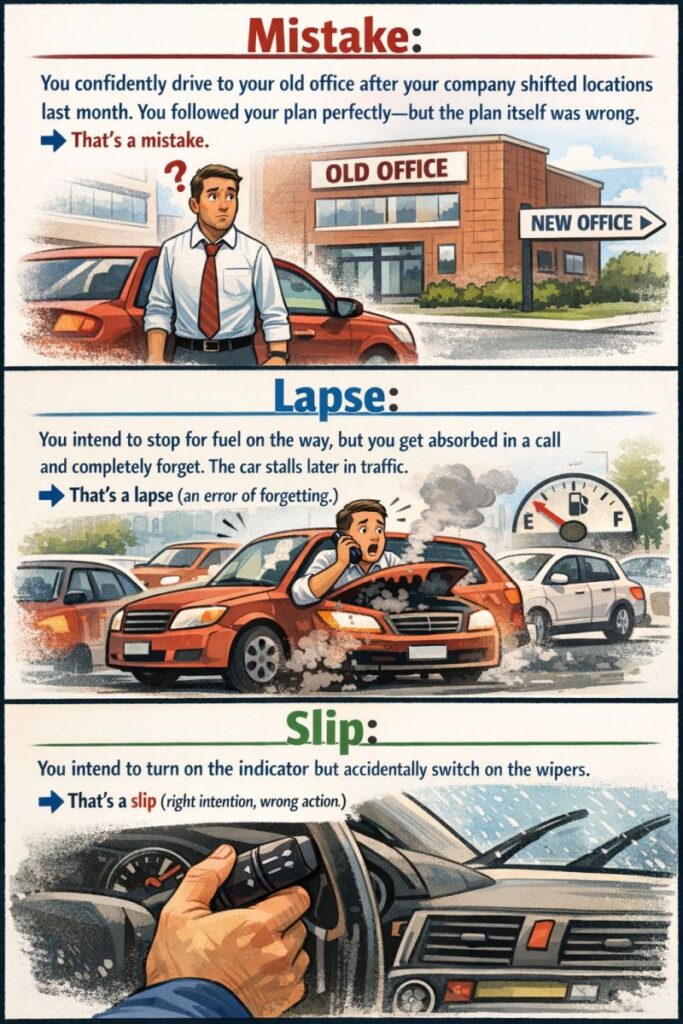

You confidently drive to your old office after your company shifted locations last month. You followed your plan perfectly — but the plan itself was wrong.

A mistake is a failure of intention — the wrong plan, the wrong goal, or the wrong understanding of a situation. The person executing the mistake may be performing flawlessly. They are competent, attentive, and doing exactly what they decided to do. The problem is that what they decided to do is incorrect.

Mistakes live at the level of planning and knowledge. They happen when:

- The wrong rule is applied to a situation (“I know how to handle this — I’ve seen it before” — but the situation is subtly different this time)

- Knowledge gaps exist — the person genuinely doesn’t have the correct information to make the right call

- Outdated mental models persist — the world has changed, but the internal map hasn’t updated

In the driving analogy, the driver’s execution was perfect. Smooth gear changes, appropriate speed, correct lane discipline. The failure wasn’t in the doing. It was in the deciding. No amount of driving skill could correct it once the wrong destination was locked in.

Why Mistakes Are Particularly Dangerous

Mistakes are especially insidious because they feel right. The person committing a mistake typically experiences no internal signal that anything is wrong. There is no friction, no moment of hesitation, no nagging sense of unease. Confidence is often high. This is why experienced professionals can be just as vulnerable to mistakes as novices — sometimes more so, because experience brings with it a library of “this is just like last time” pattern-matching that can override careful analysis when it shouldn’t.

How to Fix Mistakes

Because mistakes arise from wrong knowledge, wrong rules, or wrong models, the fixes must target those roots:

- Training and education — ensuring people have accurate, up-to-date information about how processes, systems, and environments actually work today, not how they worked two years ago

- Decision support tools — checklists, decision trees, and protocols that force structured thinking in high-stakes or novel situations rather than relying on memory and pattern recognition

- Scenario-based learning — presenting people with cases where their instinctive response would be wrong, building the habit of questioning the plan before executing it

- Regular model updates — in organisations that change frequently, actively communicating what has changed and why, rather than assuming people will update their mental models passively

- Psychological safety for challenge — creating cultures where people can ask “are we sure about this?” without social penalty, catching mistaken plans before they are executed

What Is a Lapse?

You intend to stop for fuel on the way, but you get absorbed in a call and completely forget. The car stalls later in traffic.

A lapse is a failure of memory — the right plan existed, but the person failed to execute a step due to forgetting, distraction, or disruption of the intended sequence. The goal was correct. The knowledge was there. The intention was genuine. But somewhere between deciding and doing, the action fell through the gap.

Lapses operate at the level of memory and attention. They happen when:

- Working memory is overloaded — too many competing demands, and something gets dropped

- Interruptions break the chain — a well-practised sequence is interrupted and the person re-joins at the wrong point, skipping a step

- Habitual patterns override the planned deviation — you fully intend to take a different route today, but habit steers you on autopilot down the usual one

- Time pressure compresses attention — items that feel “lower priority in this moment” are mentally deferred and then never recalled

The fuel-stop story captures it perfectly. Stopping for fuel was the plan. The driver knew it was necessary. The intention was real. But a phone call — an interruption — shifted the cognitive load, and the memory of the refuelling intention simply wasn’t retrieved when it needed to be.

Why Lapses Are Underestimated

Research consistently shows that lapses, alongside slips, account for the vast majority of errors in skilled, routine work — with estimates suggesting around 85% of workplace errors arise from slips and lapses rather than mistakes. This is a striking figure, and it carries a striking implication: most errors are happening not because people don’t know their jobs, but because they do know their jobs and are doing them largely automatically — which is exactly when lapses strike.

Organisations often invest heavily in training and knowledge transfer to address perceived “mistake problems,” when the actual failure pattern in their environment is dominated by lapses and slips. The investment goes to the wrong place, and the error rate doesn’t fall.

How to Fix Lapses

Because lapses arise from memory failure and attentional disruption, the fixes must create external memory systems and attentional supports:

- Checklists — not as bureaucratic box-ticking, but as genuine memory offloading devices. The checklist does not assume people don’t know the steps; it assumes human memory is unreliable under load and compensates for that

- Reminders and prompts at the point of action — alerts, notifications, and environmental cues placed at the moment and location where the action needs to happen

- Interruption management protocols — procedures that require people to explicitly mark their place in a sequence before attending to an interruption (“I’m at step 4, responding to interruption, returning to step 4”)

- Reduced cognitive load through simplification — fewer concurrent demands where critical tasks are performed

- Buddy systems and read-back verification — particularly in high-stakes environments, having a second person verify that all intended steps were completed
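The interruption protocol and the checklist idea above can be sketched in code. This is only an illustration, not something taken from Reason or Evans: the `Checklist` class and its method names are hypothetical. It assumes a task is a fixed list of steps and that the person (or tool) calls `interrupt()` before turning away from the task.

```python
class Checklist:
    """Hypothetical external-memory aid: tracks steps and an interruption bookmark."""

    def __init__(self, steps):
        self.steps = list(steps)
        self.done = [False] * len(self.steps)
        self.bookmark = None  # index of the step to return to after an interruption

    def complete(self, index):
        """Mark one step as finished."""
        self.done[index] = True

    def next_step(self):
        """Return the index of the first unfinished step, or None if all are done."""
        for i, finished in enumerate(self.done):
            if not finished:
                return i
        return None

    def interrupt(self):
        """Explicitly record the current place before attending to an interruption."""
        self.bookmark = self.next_step()
        return self.bookmark

    def resume(self):
        """Return the recorded place and clear the bookmark."""
        index, self.bookmark = self.bookmark, None
        return index

    def missing(self):
        """List the steps that have not been completed (a read-back check)."""
        return [step for step, finished in zip(self.steps, self.done) if not finished]
```

In the fuel-stop story, a driver who had completed "plan route" and then took a call would get back the index of "stop for fuel" from `resume()`, and `missing()` would still list it at the end of the trip. The point of the design is that the place in the sequence is stored outside the person's head.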

What Is a Slip?

You intend to turn on the indicator but accidentally switch on the wipers.

A slip is a failure of execution — the right plan, the right intention, but the wrong physical or verbal action performed. Something else happened in place of what was meant. The goal was correct. The person was trying to do the right thing. But the action that came out wasn’t the action that was intended.

Slips operate at the level of execution and physical action. They happen when:

- Similar actions are stored near each other in physical or procedural space — reaching for one control and activating another beside it

- Highly practised, automatic routines capture control — doing the “usual” thing when you meant to do something slightly different

- Fine motor actions are executed in a degraded state — tiredness, stress, or distraction degrades the precision of physical action

- Interface design creates ambiguity — controls that look alike, feel alike, or sit close together invite the wrong one to be activated

The wiper/indicator example is classic. Both controls are stalks on a steering column. They live next to each other. The motion to activate them is similar. Reach for one when tired, rushed, or cognitively loaded — and the other one comes on. The driver didn’t want wipers. There was no rain. The intention was entirely correct. The execution just misfired.

Why Slips Catch Us Off Guard

Slips feel embarrassing precisely because they seem avoidable. “I know how to use my own car.” “I’ve done this a thousand times.” And that’s exactly the point: slips are most common in highly practised, automatic behaviours. The very fluency that makes skilled performance efficient also makes it vulnerable to execution errors. Automaticity saves cognitive resources but sacrifices fine-grained conscious monitoring of each individual action.

In complex work environments — operating theatres, control rooms, cockpits, manufacturing floors — the physical and procedural landscape is dense with similar-looking, similar-feeling controls and sequences. Slips are not a failure of skill. They are an entirely predictable consequence of human motor and attentional systems operating in environments they weren’t designed for.

How to Fix Slips

Because slips arise from execution failures in automatic action, the fixes must target the environment and the interface, not the person:

- Error-tolerant design: make the right action easy and the wrong action hard or impossible. Physical guards, confirmation steps, and safeguards around irreversible actions (such as an undo option) all fall here

- Differentiation: ensuring that similar controls are not placed next to each other, and that they look different, feel different, or require different gestures to activate

- Forcing functions — system or physical designs that make it impossible to proceed without completing the correct prior action

- Standardisation of layouts — reducing the number of times a person must override an expected muscle-memory response by making environments consistent

- Slowing down for high-stakes moments — building deliberate pauses into critical action sequences, interrupting automaticity just long enough for conscious verification
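For software-mediated work, a forcing function can be sketched as follows. This is a minimal illustration under stated assumptions: `GuardedProcedure` and `StepNotCompleted` are hypothetical names, and the "verification" is reduced to comparing an expected value with an actual one. The critical action simply cannot run until the check has passed.

```python
class StepNotCompleted(Exception):
    """Raised when the critical action is attempted before its prerequisite."""


class GuardedProcedure:
    """Hypothetical forcing function: execute() is blocked until verify() passes."""

    def __init__(self):
        self._verified = False

    def verify(self, expected: str, actual: str) -> bool:
        """Deliberate pause: compare what was intended with what is about to happen."""
        self._verified = expected == actual
        return self._verified

    def execute(self) -> str:
        """Perform the critical action only if verification has just passed."""
        if not self._verified:
            raise StepNotCompleted("verification must pass before execution")
        # Reset so that every execution needs its own fresh verification.
        self._verified = False
        return "executed"
```

The design choice worth noting is the reset inside `execute()`: a verification done once does not unlock every later action, so an automatic "usual" repetition is stopped rather than waved through.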

The Critical Implication: Systems, Not People

Here is what all three of these categories share, despite their differences: none of them are fundamentally about bad people.

Mistakes happen because humans work from mental models that are necessarily incomplete and sometimes outdated. Lapses happen because human memory is not a hard drive — it is reconstructive, interference-prone, and load-sensitive. Slips happen because human action systems are optimised for speed and efficiency through automation, which comes with a vulnerability to execution error.

None of these are character flaws. None of them are solved by working harder, being more careful, or “paying attention.” The exhortation to “be more careful” is perhaps the most common — and most useless — response to human error in organisations. It addresses none of the actual mechanisms. It places the burden of compensating for normal human limitations entirely on the individual, in an environment that was often designed without those limitations in mind.

The alternative is to design systems simple enough for humans — not systems that expect flawless performance from people operating in complex, high-pressure, interruption-rich environments.

This means:

- Auditing your environment through the lens of mistake, lapse, and slip risk — where in your processes is each type of error most likely to occur?

- Designing defences that match the error type — training and decision tools for mistakes, memory aids and interruption protocols for lapses, interface design and forcing functions for slips

- Measuring errors by type: when something goes wrong, the investigation should ask not only what happened but also what type of error it was, so the right countermeasure can be applied

- Shifting the cultural narrative — from “who failed?” to “what conditions produced this failure, and how do we change those conditions?”
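One way to make "measuring errors by type" concrete is a small classification sketch like the one below. It is an assumption-laden simplification, not Reason's or Evans' method: the `Incident` fields and the two-question decision rule are hypothetical, and real investigations will need more nuance. The countermeasure lists are taken from the recommendations in this article.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class ErrorType(Enum):
    MISTAKE = "mistake"
    LAPSE = "lapse"
    SLIP = "slip"


# Countermeasures as recommended in this article for each error type.
COUNTERMEASURES = {
    ErrorType.MISTAKE: ["training", "decision tools", "updated procedures"],
    ErrorType.LAPSE: ["checklists", "reminders", "interruption protocols"],
    ErrorType.SLIP: ["interface design", "forcing functions", "standardisation"],
}


@dataclass
class Incident:
    description: str
    plan_was_correct: bool  # hypothetical field: was the intended plan right?
    step_was_omitted: bool  # hypothetical field: was an intended step forgotten?


def classify(incident: Incident) -> ErrorType:
    """Simplified rule: wrong plan -> mistake; omitted step -> lapse; otherwise slip."""
    if not incident.plan_was_correct:
        return ErrorType.MISTAKE
    if incident.step_was_omitted:
        return ErrorType.LAPSE
    return ErrorType.SLIP


def tally_by_type(incidents):
    """Count incidents per error type so the dominant failure pattern is visible."""
    return Counter(classify(incident) for incident in incidents)


# The three driving stories from this article, encoded for illustration.
DRIVING_EXAMPLES = [
    Incident("drove to the old office", plan_was_correct=False, step_was_omitted=False),
    Incident("forgot the fuel stop", plan_was_correct=True, step_was_omitted=True),
    Incident("wipers instead of indicator", plan_was_correct=True, step_was_omitted=False),
]
```

Running `tally_by_type` over a real incident log would show whether an environment is dominated by mistakes or by slips and lapses, and `COUNTERMEASURES` then points to the family of fixes worth investing in.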

Putting It Together: A Quick Reference

| Error Type | What Fails | Conscious Control | Cause | Fix |
|---|---|---|---|---|
| Mistake | The plan or intention | High (deliberate) | Wrong knowledge, wrong rule, outdated model | Training, decision tools, updated procedures |
| Lapse | Memory of the intention | Medium (semi-deliberate) | Forgetting, distraction, interruption | Checklists, reminders, interruption protocols |
| Slip | Physical execution of the intention | Low (automatic) | Automaticity, similar controls, fatigue | Interface design, forcing functions, standardisation |

Final Thought

Most errors are not committed by careless people. Most errors are committed by skilled, competent, well-intentioned people operating in systems that make mistakes easy to make, lapses likely to occur, and slips easy to commit.

The goal of error reduction is not to find better humans. It is to build better systems.

And that starts with understanding precisely what type of error you are dealing with — because the right answer to the wrong question doesn’t help anyone.

This perspective draws on the foundational work of James Reason in human error theory and the practical frameworks developed by John Evans in his Error Risk Reduction reference manual — one of the most clear-eyed and practically useful guides to understanding human error in real working environments.